Why Does Your Team Keep Shipping and Missing?

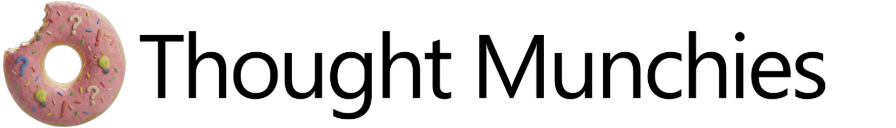

Your team shipped. The metrics are flat. The retro points at execution. Again. The failure didn't start there.

I have sat in both rooms.

The engineering retro and the product post-mortem. Different vocabulary, different teams, different problems on the table. But the physics of the failure are always the same.

On the product side, the launch was successful but the metrics are flat. On the engineering side, the technical debt was paid, but nobody knows what that means for the business.

This then gets justified by statements like:

"It takes time for users to change their behavior." — PM

"This code is easy to maintain now." — Devs

Apart from the dopamine hit of delivering what was on the roadmap, and the well-deserved kudos for everyone who worked late to make the launch possible, there is not much to show.

Everyone talks about valuing Outcomes over Outputs, but no one is sure how to debug when things don't go as planned.

The team may run a retrospective, identify root causes like a rushed rollout, unclear messaging, or scope cut close to the launch, and commit to better sprint planning, clearer briefs, a proper launch checklist.

But six months later, when they ship again with "better processes" and "tighter execution," the metrics are still flatlined like a patient who's past the point of resuscitation.

The Causal Stack

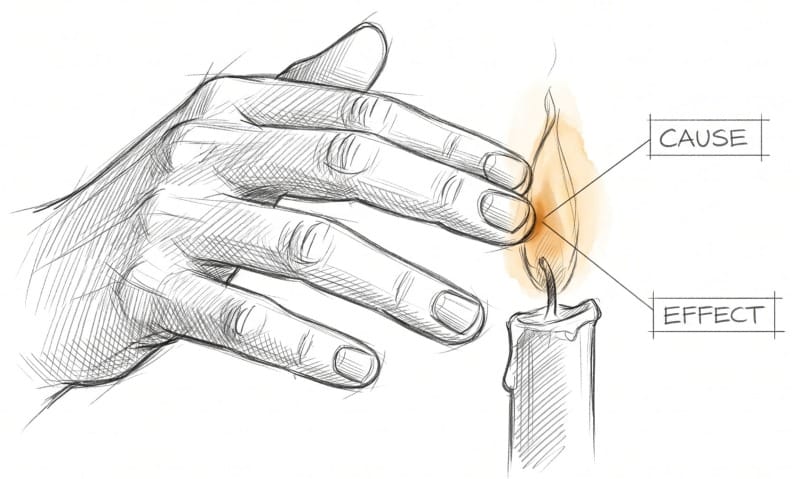

When you're having a heart attack, your left arm hurts.

Not your chest. Not your heart. Your arm.

It's because the heart and the left arm share the same nerve pathways into the brain. When the heart is starved of oxygen and sends out distress signals, the brain receives them, but it can't pinpoint the exact source. It knows the signal came from somewhere in that network. So it does what brains do with ambiguous information: it makes a guess. The guess is the arm.

The arm is fine. The heart is dying. The pain is real. The location, wrong.

Nearly 40% of heart attack patients experience no chest pain at all. Just the arm. Or the jaw. Or an unusual fatigue that feels like the flu. With no obvious chest signal to follow, many of them visit an orthopaedic doctor or, worse, take an over-the-counter medicine for the fatigue. And the real problem goes untreated.

Product and engineering failures work the same way. By the time a failure shows up in a metrics review, a missed launch, or a flatlined adoption curve, the decision that caused it is somewhere upstream, in the past.

To get to it, you need to rewind. There are three layers where a failure can originate. Together they form what I call The Causal Stack.

The Stack, Layer by Layer

The Bet Layer: Are we solving the right problem?

A bet is a claim of the form: "If we solve this problem, this outcome will follow."

For engineering teams, this is the tech strategy (or the absence of one). Engineers are trained to fix things. Measuring how a fix actually moves the needle is rarely part of the job description.

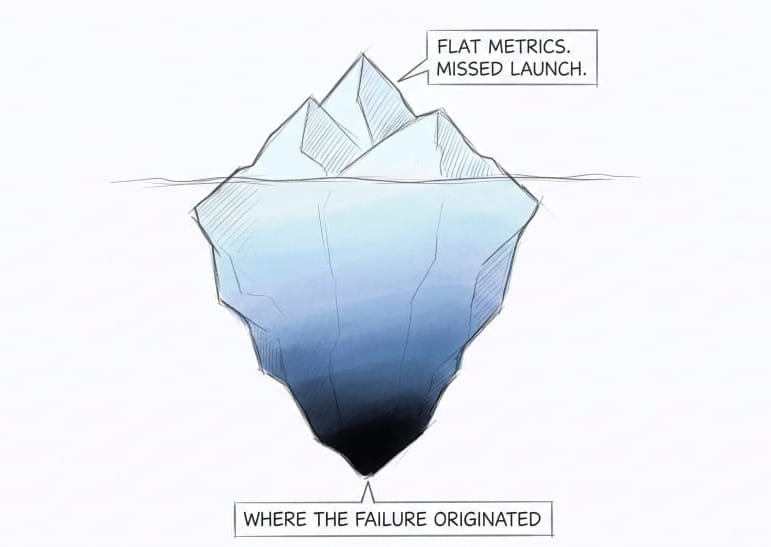

A common product failure looks like this: a team bets that simplifying onboarding will improve market share, assuming friction is the primary reason users aren't converting.

That assumption is never tested. The bet is built on a feeling dressed up as a hypothesis. If users who complete onboarding are already converting well, the friction isn't the problem. The right customers aren't entering the funnel in the first place. The bet was pointing at the wrong stage entirely.
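Testing that assumption can be as simple as looking at conversion stage by stage before committing to the bet. The sketch below is a minimal illustration: the stage names and counts are made up, and `step_conversion` and `weakest_step` are hypothetical helpers, not part of any analytics tool.

```python
# Hypothetical funnel counts; replace with numbers from your own analytics export.
funnel = [
    ("visited_signup", 10_000),
    ("started_onboarding", 1_200),
    ("completed_onboarding", 1_000),
    ("converted_to_paid", 800),
]


def step_conversion(stages):
    """Return (from_stage, to_stage, rate) for each adjacent pair of stages."""
    rates = []
    for (prev_name, prev_count), (name, count) in zip(stages, stages[1:]):
        rate = count / prev_count if prev_count else 0.0
        rates.append((prev_name, name, rate))
    return rates


def weakest_step(stages):
    """Return the transition with the lowest conversion rate."""
    return min(step_conversion(stages), key=lambda r: r[2])


for src, dst, rate in step_conversion(funnel):
    print(f"{src} -> {dst}: {rate:.0%}")

src, dst, rate = weakest_step(funnel)
print(f"Largest drop-off: {src} -> {dst} ({rate:.0%})")
```

With these made-up numbers, most people who start onboarding finish it and most who finish it convert. The largest drop-off sits before onboarding begins, which is exactly the case where simplifying onboarding would be a bet on the wrong stage.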

Similarly, a common engineering failure in this layer looks like this: a team bets that breaking a monolith into microservices will improve deployment velocity, assuming codebase complexity is the primary reason shipping is slow. That assumption, that the architecture is the bottleneck, is never tested. The bet is built on frustration dressed up as a technical strategy. If features still require manual cross-team coordination to launch, the problem isn't the monolith. It's the release process. The bet was pointing at the wrong door.

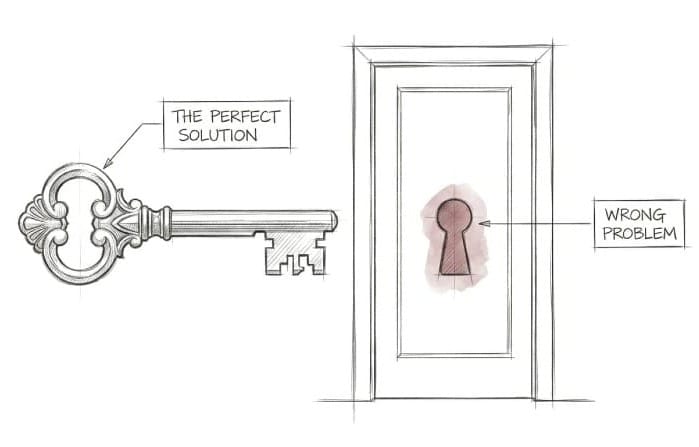

The Roadmap Layer: Are we prioritizing the right solution?

This is where you decide how to pursue the bet. For product teams, this is solution selection. Which feature, which approach, which trade-off. For engineering teams, this is the prioritization of debt and technical work. Without a clear bet as a north star, prioritization defaults to the loudest voice or the easiest ticket.

A roadmap failure often hides behind a validated bet. The customer problem is real, the bet is correct, but the solution chosen to pursue it doesn't actually solve what was validated. A team building a new onboarding flow chooses to remove steps because it's faster to build, when the drop-off was actually caused by users not understanding the product's value before committing. Removing steps changes nothing. The right problem was identified. The wrong solution was prioritized.

For engineering teams, the same pattern shows up in debt prioritization. A team identifies that slow deployments are hurting product velocity. But instead of fixing the flaky test suite that's causing most of the delays, they spend the quarter refactoring a service that engineers find frustrating but that nobody actually waits on. Right area. Wrong problem within it.

The Execution Layer: Are we building and shipping it right?

This is where you build and ship. This is the layer everyone can see: code written, designs finalized, launch week. For product teams, execution failures look like underestimated scope, discovery that didn't happen, and a solution that shipped incomplete because the complexity wasn't understood before the build started.

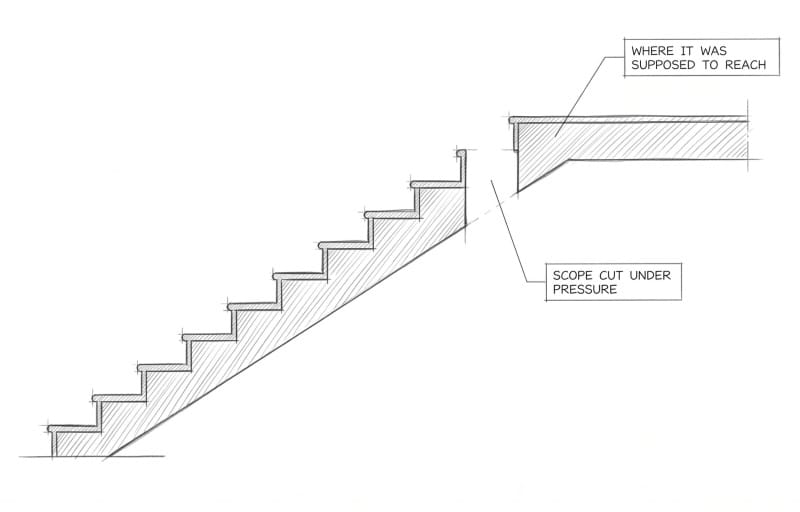

Leadership committed to a timeline before the team mapped the work. The sprint starts. The complexity surfaces. Scope gets cut under pressure. What ships is a thinner version of what was designed.

For engineering teams, the same failure looks slightly different but comes from the same place. A team takes on a technical initiative without breaking it down properly. Midway through, the work proves harder than expected. Corners get cut. The implementation is technically complete but fragile. It works until it doesn't. And the team is back in the next sprint fixing what they rushed in this one.

Nobody deliberately ships something incomplete. Execution failures at this layer are almost always a planning failure in disguise.

Why You Keep Looking in the Wrong Place

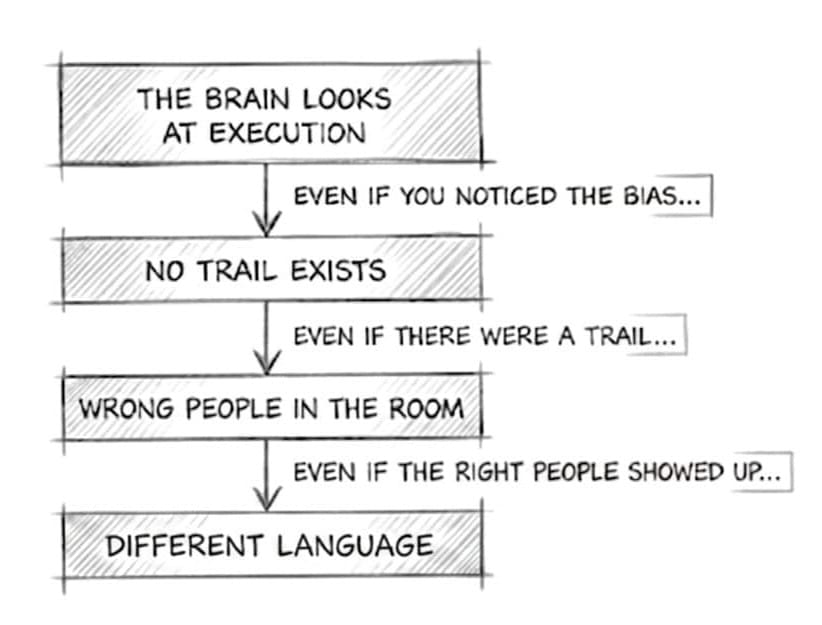

Because four forces work together to make it impossible to investigate the right layer.

- The brain reaches for the nearest cause, so the investigation starts in the wrong place.

- Even if you noticed the bias, the assumption left no trail, so there's nothing to examine.

- Even if there were a trail, the people who made the bet aren't in the room, so nobody can answer for it.

- Even if they were, the layers use different terminology, so the diagnosis never reaches the right layer.

1. The Brain Blames the Nearest Thing

We blame the most recent action because the original decision is buried in the past.

The human brain is wired to find causes closest to effects. You touch fire, your hand burns. The cause and the consequence arrive together, and the brain learns the pattern. This is useful. This is how we survive.

Complex systems break this wiring.

In a product organization, a decision made in January can cause a metrics failure in April. Three months of work sit between the cause and the consequence. By the time the failure surfaces, the original decision is buried under so many subsequent choices it has become effectively invisible. The brain looks for a cause. It finds the most recent thing. It assigns blame there.

2. No Paper Trail

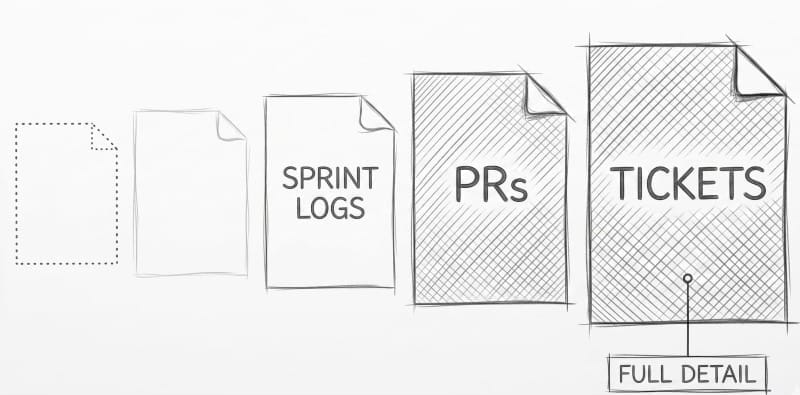

Execution leaves a clear paper trail. Strategic assumptions leave nothing.

Execution failures have clear owners. The engineer who estimated the work, the PM who cut scope, the designer who signed off on the flow. The retro format is built to surface exactly these decisions. They are documented in tickets, in Slack threads, in the sprint log. They are discussable because they are locatable.

Bet-layer failures differ in their fundamental structure, not just their timeline.

Strategic assumptions don't get made in a single meeting. They emerge gradually, across early conversations, in the space between what was said and what was heard, until they become so embedded in a project's logic that questioning them would feel like questioning the leadership itself. By the time a team is deep in execution, the assumption isn't even visible anymore.

Even when people are willing to examine it, there is nothing concrete to examine. No ticket to pull up. No decision log to reference.

You can only audit what left a trail.

And it is worse than a missing trail: the thing that should have left one may never have been agreed upon. Two people walked out of the same meeting with different beliefs about what was decided. Neither checked.
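To make "a trail" concrete, here is a minimal sketch of what a written-down bet could capture. Everything in it is an assumption for illustration: the `BetRecord` name, its fields, and the example values are hypothetical, not a prescribed template.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class BetRecord:
    """A written-down bet. Hypothetical fields, not a standard template."""
    problem: str            # the problem we believe we are solving
    expected_outcome: str   # the outcome we expect if the problem is solved
    key_assumption: str     # the belief that must hold for the bet to pay off
    owner: str              # who placed the bet and can answer for it
    decided_on: date        # when the bet was made
    evidence: list[str] = field(default_factory=list)  # what supports the assumption

    def is_tested(self) -> bool:
        """Treat a bet as tested only if some evidence was recorded."""
        return len(self.evidence) > 0


# Example values are invented for illustration.
bet = BetRecord(
    problem="Onboarding friction",
    expected_outcome="Higher conversion",
    key_assumption="Friction is the primary reason users aren't converting",
    owner="Head of Product",
    decided_on=date(2024, 1, 15),
)
print(bet.is_tested())
```

A record like this gives a later retro something to pull up: the assumption is stated in one sentence, it has an owner, and an empty `evidence` list makes it visible that the assumption was never tested.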

3. The Right People Aren't in the Room

The team runs the retro, but the right people aren't even in the room.

The retro convenes the people who did the work. The people who set the original direction, the leadership, whoever placed the bet, are rarely present.

This matters because the Causal Stack maps to the org chart. Each layer is owned by a different tier of the organization and each tier has a different level of authority to examine it.

The Bet layer is owned by different people than the Execution layer. A team can only interrogate the decisions it made. The decisions that happened above them are not theirs to examine.

So the meeting does what any meeting does: it works with the people present. The people present own execution. The bet stays unexamined because the person who could answer for it is three floors up and three projects further along.

4. The Layers Don't Speak the Same Language

Engineering talks complexity. Leadership talks outcomes. The translation doesn't happen automatically.

Even if the right people were in the room, the conversation would face a different problem.

Each layer of the Causal Stack has its own vocabulary.

- The Execution layer speaks in sprints, scope, and tickets.

- The Roadmap layer speaks in trade-offs and solutions.

- The Bet layer speaks in market hypotheses and strategic direction.

When a concern surfaces in one layer, it rarely lands in the language of another.

Developers say a service is fragile, hard to work with, accumulating risk. What they mean is that this debt is making it impossible to test the bets the product team is placing. The experiment can't run. The feature that should take three weeks will take three months. The bet can't be validated because the infrastructure can't support it.

The case was made in engineering terms. The impact was never translated into the outcome layer where prioritization decisions get made. So the debt stays. The slowdown compounds. The same conversation happens every quarter in the same language and receives the same answer.

What Now

The Causal Stack doesn't tell you what to fix. It tells you where to look, and who needs to be in the room when you do.

There is a question that does exactly that. Asked before the retro starts, it routes the conversation to the right layer with the right people. It reframes the entire post-mortem in a single sentence.

That question, and what to do with the answer, is where this continues.